While at NFD5 I had the privilege of hearing a presentation from a new startup called Plexxi. It wasn’t the first time I’d heard of them. They’ve been covered on Packet Pushers and there are other ramblings about them out on the internets. There are many things that make Plexxi interesting, but in this post I’d like to talk about just two of them.

The first interesting piece of the Plexxi solution is the actual hardware. The current model of the Plexxi switch (PX-S1-R) is a 1U switch advertising 1.28 Tbps of switching capacity. The switch advertises many standard features such as redundant hot-swap power supplies, 32 SFP+ ports, and 2 QSFP+ ports (sure looks like 4 QSFP+ ports to me, but their doco claims otherwise – http://bit.ly/YHiJAZ). The thing you won’t see advertised on most other 1U switches is what Plexxi is calling the ‘LightRail’ optical interface. Each Plexxi switch has one of these connections. The LightRail interface itself is composed of two fiber connections, one labeled ‘EAST’ and another labeled ‘WEST’…

If you’re thinking ahead, you might have already figured out that these ports are for interconnecting the Plexxi switches. You might have also figured out that they connect using a ring topology.

The LightRail interface uses a fairly common technology referred to generally as wavelength division multiplexing (WDM). Specifically, Plexxi uses CWDM, or coarse wavelength division multiplexing. WDM technology allows you to multiplex a range of optical signals onto a single fiber. Each optical signal operates on a particular wavelength within the single physical strand. Each of these wavelengths is commonly referred to as a lambda. CWDM technology generally allows you to use 16 lambdas per physical fiber. Dense WDM (DWDM) uses tighter channel spacing and generally allows for up to 128 lambdas on a single fiber. I’m assuming Plexxi used CWDM since it’s cheaper. CWDM optics can be less precise than those required for DWDM since the channels are spaced much further apart.

In addition to the WDM technology, the LightRail interface uses a different connector than what you are likely used to seeing…

This connector is referred to as Multi-fiber Push On, or MPO. Plexxi says that this is what they use to make a physical connection between East – West switches. Specifically, they use a connector that supplies 12 cores of fiber (6 pairs, or 6 RX and 6 TX cores) between each switch. They claim that this gives each interface in the LightRail 120 Gbps of full duplex throughput.

This is where I got puzzled. If I have 6 pairs of fiber, and with CWDM I can get 16 channels out of a single fiber, shouldn’t I have something like 96 ten gig lambdas per direction on the LightRail? I would think so, but it appears that isn’t the case. From what I can tell, they are using CWDM, but only to squeeze two lambdas onto each physical core of fiber. At this point, why didn’t they just use the 24 core MPO connector?
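To make that arithmetic concrete, here is a rough back-of-the-envelope sketch in Python. It only uses figures quoted in this post; the two-lambdas-per-core number is my own inference rather than anything Plexxi has published, and the constant and function names are made up for illustration.

```python
# Back-of-the-envelope LightRail capacity, per direction (east or west).
FIBERS_PER_DIRECTION = 6    # 12-core MPO = 6 TX cores + 6 RX cores
LAMBDA_RATE_GBPS = 10       # each lambda carries one 10 GigE signal
CWDM_LAMBDAS_PER_FIBER = 16 # the general CWDM figure quoted above
OBSERVED_LAMBDAS_PER_FIBER = 2  # assumption: inferred from the 120 Gbps claim


def lightrail_gbps(fibers, lambdas_per_fiber, rate_gbps=LAMBDA_RATE_GBPS):
    """One-way capacity in Gbps for a given number of fibers and lambdas."""
    return fibers * lambdas_per_fiber * rate_gbps


theoretical = lightrail_gbps(FIBERS_PER_DIRECTION, CWDM_LAMBDAS_PER_FIBER)
observed = lightrail_gbps(FIBERS_PER_DIRECTION, OBSERVED_LAMBDAS_PER_FIBER)

print(f"Fully loaded CWDM: {theoretical} Gbps one way")
print(f"Two lambdas per core: {observed} Gbps one way")
```

The second figure lines up with the 120 Gbps Plexxi quotes, which is why I believe only two lambdas per core are in use.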

The answer to that question lies in several places. It appears that the chief reason at this stage of the game is that they are using the Broadcom Trident 2 chipset in the switch. From what I can discern from Broadcom’s site, the Trident 2 chip is capable of handling over 100 ten GigE ports. So I can see this as a possible limitation in terms of actual port termination. Considering that the Plexxi switch has…

32 – 10 GigE front facing

8 (2(4 x 10GigE)) – QSFP (40 Gig) front facing

12 – 10 GigE east bound LightRail

12 – 10 GigE west bound LightRail

That already takes us up to 64 10 GigE ‘interfaces’. So there appears to be room there, but I’m assuming it gets used. That breakdown points out another interesting fact. You are talking an almost 2:1 ratio of access to uplink ports. For a TOR switch, this is pretty impressive.
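The port tally above can be checked with a few lines of Python. This is just a sketch of my own counting; the 100 port figure for the Trident 2 is a conservative stand-in for the "over 100" I read on Broadcom’s site, not an exact spec.

```python
# 10 GigE "interfaces" the switching chip has to terminate, per the list above.
ports_10g = {
    "front SFP+": 32,
    "front QSFP+ (2 ports x 4 lanes)": 2 * 4,
    "LightRail east": 12,
    "LightRail west": 12,
}

# Assumption: "over 100" ten GigE ports, so 100 is used as a lower bound.
TRIDENT2_10G_PORTS_FLOOR = 100

total = sum(ports_10g.values())
access = ports_10g["front SFP+"] + ports_10g["front QSFP+ (2 ports x 4 lanes)"]
uplink = ports_10g["LightRail east"] + ports_10g["LightRail west"]

print(f"Total 10 GigE interfaces: {total}")
print(f"Access to uplink ratio: {access}:{uplink} ({access / uplink:.2f}:1)")
print(f"Unused chip ports (at least): {TRIDENT2_10G_PORTS_FLOOR - total}")
```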

The other reason they used CWDM rather than additional physical ports has to do with how the Plexxi switches form a logical topology. Being able to use wavelengths rather than physical cable gives you all sorts of interesting applications (more on that below).

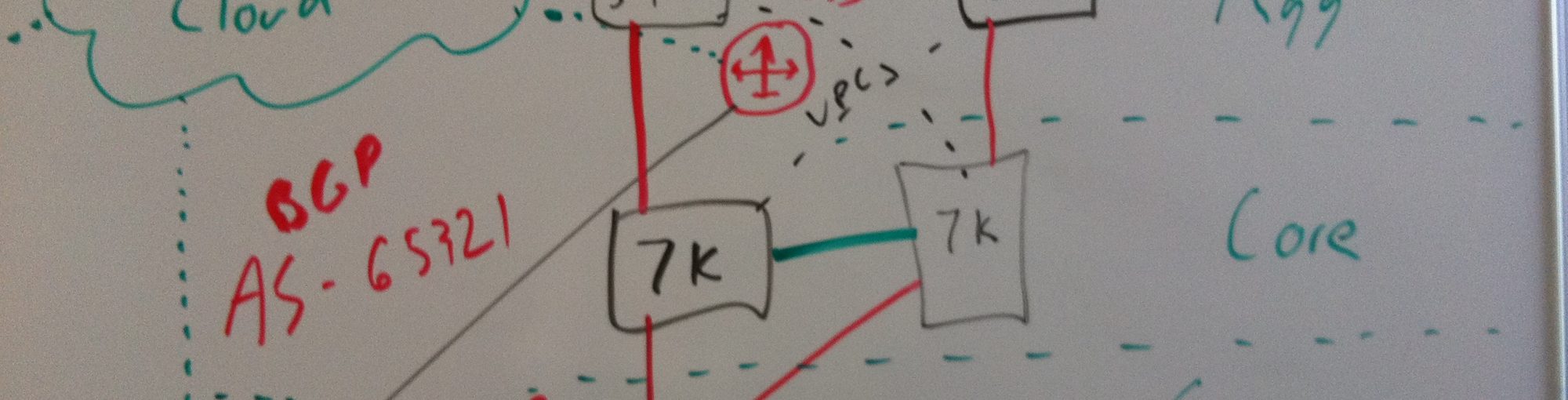

Now that we know what sort of bandwidth we have to work with on the LightRail, let’s talk about how it’s allocated. Let’s look at a base ring topology…

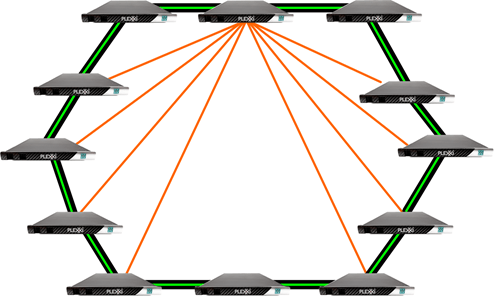

Here we have 12 Plexxi switches in a ring. The black lines indicate the physical LightRail connections between each device. Recall that we have 120 gig, or twelve 10 gig interfaces, per direction on the LightRail. The 120 gig is carved up between what Plexxi calls ‘base lanes’ and ‘express lanes’. Each switch creates four 10 gig paths to each of its directly connected neighbors…

Each green line above represents the base lanes, or 40 gig of bandwidth. The base lanes provide each switch with four dedicated optical paths to each of its directly connected neighbors. In addition, each switch then creates two 10 gig paths (the express lanes) to each of the next 4 closest neighbors to the east and to the west on the ring. For ease of visualization, I’ll only show that for one of the switches…

So if we add that all up, we should get 120 gig in each direction…

This topology is what is referred to as a chordal ring. More specifically, this base topology would be considered a 10 degree chordal ring, since each node has 10 connections to other nodes in the ring. Keep in mind that this is just the base topology, i.e. what you get when you’ve just booted up the Plexxi ring. This is not static and can change to better fit the applications running on top of Plexxi (more on this soon). As you can imagine, this lends itself to all kinds of interesting logical topologies that the ring can use.
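As a sanity check on the lane math, here is a small Python sketch of the base topology as I understand it: four lanes to the adjacent switch and two lanes to each of the next four switches, in each direction. The switch numbering (0 to 11, with east meaning +1) is purely my own convention, and the sketch only tallies lanes originating at one switch. It does not try to model how pass-through lambdas share the physical fiber.

```python
LANE_GBPS = 10
BASE_LANES = 4      # lanes to the directly connected neighbor
EXPRESS_LANES = 2   # lanes to each of the next EXPRESS_REACH neighbors
EXPRESS_REACH = 4


def base_chords(switch, ring_size):
    """Map (direction, neighbor) -> number of 10 gig lanes for one switch."""
    chords = {}
    for direction, step in (("east", 1), ("west", -1)):
        for hop in range(1, EXPRESS_REACH + 2):
            neighbor = (switch + step * hop) % ring_size
            lanes = BASE_LANES if hop == 1 else EXPRESS_LANES
            chords[(direction, neighbor)] = lanes
    return chords


chords = base_chords(switch=0, ring_size=12)
east_gbps = LANE_GBPS * sum(n for (d, _), n in chords.items() if d == "east")
west_gbps = LANE_GBPS * sum(n for (d, _), n in chords.items() if d == "west")
degree = len({neighbor for _, neighbor in chords})

print(f"East: {east_gbps} Gbps, West: {west_gbps} Gbps, degree: {degree}")
```

With 12 switches this gives 120 gig each way and 10 distinct neighbors, which matches the 10 degree chordal ring described above.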

So now that we know about the switch to switch connectivity, let’s talk about what happens to actual data entering a switch. As we discussed above, the switches are based off of the commodity Broadcom Trident 2 chipset. So there are really three main components to a Plexxi switch: an optical module (handles the WDM and LightRail), the Broadcom chip (for the actual ‘switch’ processing), and a crossbar fabric to connect the two. When traffic enters a switch, it can do one of three things. It can head to the Broadcom (access ports), optically bypass the switch (on its way around the ring to somewhere else), or be optically switched to another wavelength. The crossbar can be programmatically manipulated to send incoming traffic wherever it’s needed.

So it’s a safe assumption then that incoming wavelengths can be programmed to either terminate on the switch (end access port for host) or get optically switched outbound to another Plexxi switch. Confused? For now just keep the following fact in mind. Despite the fact that the physical cabling is a flat ring, you are really working with a ‘full’ optical mesh. In a design with 11 Plexxi switches, each switch would have direct connectivity to every other switch in the ring.
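The 11 switch claim is easy to verify with a quick sketch. This assumes the base topology reaches five switches in each direction (the adjacent neighbor plus the next four), which is my reading of the lane layout. The function names are mine.

```python
def direct_neighbors(switch, ring_size, reach=5):
    """Switches that `switch` has a direct optical path to in the base topology."""
    reachable = set()
    for step in (1, -1):          # east, then west
        for hop in range(1, reach + 1):
            reachable.add((switch + step * hop) % ring_size)
    reachable.discard(switch)     # small rings can wrap back onto themselves
    return reachable


def is_full_mesh(ring_size, reach=5):
    """True if every switch has a direct path to every other switch."""
    return all(
        len(direct_neighbors(s, ring_size, reach)) == ring_size - 1
        for s in range(ring_size)
    )


for size in (11, 12):
    print(f"{size} switches -> full mesh: {is_full_mesh(size)}")
```

At 12 switches the one node directly across the ring has no direct path in the base topology, so traffic to it would need either an intermediate hop or a reprogrammed wavelength.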

Now that we’ve talked about the hardware used, let’s talk about the second interesting piece of the Plexxi solution. The software.

What makes Plexxi a true SDN solution is the software that manages this hardware. Above all of the hardware sits Plexxi control. But before we talk about the control, we have to define the term ‘affinity’. If you’ve read any Plexxi doco up to this point, you’ve probably heard the term being used. Plexxi defines an affinity as ‘referring to the relationship between data center resources required to execute a given application workload’. So basically we are talking about all of the components required to make an app in a data center work.

Traditional data center design (for the most part) strives to make the network an even playing field for applications. Since servers (and other services) can generally be deployed anywhere within a DC (or between DCs), we need to build the network in a manner that makes it ‘fair’ for all devices. Since the network isn’t dynamic, this leads to some degree of network ‘waste’. If we want good performance between A and B, and we don’t know what switches A and B will connect to, we’d better make sure that all paths will be good performers. This, of course, is not always the case. Certain apps get built with the network in mind. That also implies that the network is being engineered specifically for this use case and, once built, is once again static in nature.

Plexxi aims to change that. Rather than trying to make a fair playing field for all devices all the time, Plexxi thinks you should do the opposite. You should pinpoint what services an application uses and logically group them together. To Plexxi, this set of resources would be considered to be an ‘affinity group’. Once we identify the affinities, we can manipulate the network dynamically to better suit given affinity groups. Plexxi likes to say that this means you are ‘starting from the top down’, meaning you are starting with app requirements and then building a network that fits those needs. This being said, the heart of the Plexxi solution is the control, which Plexxi aptly named ‘Plexxi control’. The control solution has 3 major tasks: workload modeling, network fitting, and global network control.

The workload modeling component is what’s used to build an understanding of what’s running on the network. From the sounds of it, this piece of control soaks up information from as many sources as it can to try and build a complete picture of what’s running on the network. From this information, it can start to establish network needs and affinities as it sees them on the actual forwarding plane.

The network fitting component acts on the data which the modeling component gathered. Analyzing all of the known affinities, the fitting component determines what the best network topology is to fit all of the affinities.
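I haven’t seen how Plexxi actually does the fitting, so the following is purely a toy sketch of the idea and not their algorithm. It assumes affinity groups are just named sets of hosts and that we know which switch each host hangs off of. All of the host names, group names, and the `switch_pair_demand` function are hypothetical.

```python
from collections import Counter
from itertools import combinations

# Hypothetical output of the workload modeling step.
affinity_groups = {
    "web-tier": {"web1", "web2", "db1"},
    "backup": {"db1", "nas1"},
}
# Hypothetical host -> switch attachment.
host_to_switch = {"web1": 0, "web2": 3, "db1": 7, "nas1": 3}


def switch_pair_demand(groups, attachment):
    """Count, per switch pair, how many affinity relationships cross it."""
    demand = Counter()
    for hosts in groups.values():
        for a, b in combinations(sorted(hosts), 2):
            sa, sb = attachment[a], attachment[b]
            if sa != sb:  # hosts on the same switch need no ring bandwidth
                demand[tuple(sorted((sa, sb)))] += 1
    return demand


demand = switch_pair_demand(affinity_groups, host_to_switch)
for pair, weight in demand.most_common():
    print(f"switches {pair}: {weight} affinity relationship(s)")
```

A real fitting engine would presumably weigh bandwidth, latency, and the limited number of lambdas, but even this naive ranking shows which switch pairs would be first in line for extra wavelengths.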

The global network control component seems like a fancy way to say that the Plexxi switches aren’t totally reliant on the controller. Each Plexxi switch is actually a ‘co-controller’ and is capable of making some of its own decisions. This allows the network to react to link failures with the controller offline.

While I haven’t seen much of Plexxi control yet, that will obviously be the key to this solution. The hardware seems interesting and I’m sure it will get more interesting as the platform takes off. While this all seems promising, I do have a few reservations about the concept in regard to the depth of the affinities. What I mean by that is, the affinity can only go so far. For instance, consider a large blade or blade/chassis server deployment. It would not be uncommon to see 8 chassis of 8 blades hanging off of a data center switch. Running on those servers we could very easily have over 1000 VMs. The network aggregation point for those servers will likely be a rather small number of 10 gig uplinks, likely less than 10. Duplicate that design a few times, tack on a self provisioning portal for VMs that works off of open capacity in the compute pool, and I’m starting to see a problem. Applications can be deployed anywhere, and while I have no doubt that Plexxi can find some valid affinities, how useful would they be? You are dealing with possibly hundreds of affinities and thousands of servers, but only 10 uplink ports. How much can you optimize the physical network when your aggregation point for so many servers is just a few ports?
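To put rough numbers on that concern, here is a quick sketch. It assumes exactly 1000 VMs and exactly 10 uplinks at 10 gig each, which are round stand-ins for the "over 1000" and "less than 10" above, so the real picture would be somewhat worse.

```python
CHASSIS = 8
BLADES_PER_CHASSIS = 8
VMS = 1000          # assumption: the post says "over 1000"
UPLINKS = 10        # assumption: the post says "likely less than 10"
UPLINK_GBPS = 10

blades = CHASSIS * BLADES_PER_CHASSIS
uplink_gbps = UPLINKS * UPLINK_GBPS
vms_per_blade = VMS / blades
mbps_per_vm = uplink_gbps * 1000 / VMS

print(f"{blades} blades, about {vms_per_blade:.1f} VMs per blade")
print(f"{uplink_gbps} Gbps of uplink, about {mbps_per_vm:.0f} Mbps per VM")
```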

On the other hand, maybe there is something that Plexxi could do with that. Or maybe that exact solution isn’t a good fit for the product. Either way, I can certainly think of lots of other use cases for a network mesh that can dynamically change. That, in and of itself, is definitely a huge win.

As always, looking for feedback and comments.

WoW!. Nice article.

In your article, you have described 2:1 as uplink (LightRail) to access. Is it not 1:2? There are 24 lambdas, each 10G, for uplink vs. (32 * 10 + 4 * 40) towards access, making it 1:2?

BTW, does it make sense to talk about over-subscription levels in the case of Plexxi? Normally, one would talk about over-subscription when you have a standard topology of ToR, leaf, spine, etc. In Plexxi there is no such thing as leaf or spine, and the network is grown horizontally, so does it make sense to talk about over-subscription at all?

I’d argue that oversubscription is always a valid topic for discussion regardless of where it occurs. You’re right, I probably should have reversed the numbering there when I stated that. I changed it in the post. At that point in the post, I was referring to the physical uplinks. I believe I talk about CWDM later on and how that impacts the uplinks.

Thanks for reading!

Thank you.

Much useful to understand the basic architecture of Plexxi.