I’ve recently started to play around with OpenStack and decided the best way to do so would be in my home lab. During my first attempt, I ran into quite a few hiccups that I thought were worth documenting. In this post, I want to talk about the prep work I needed to do before beginning the OpenStack install.

For the initial build, I wanted something simple, so I opted for a 3-node build. The logical topology looks like this…

The physical topology looks like this…

It’s one of my home lab boxes: a 1U Supermicro with 8 GB of RAM and a 4-core Intel Xeon X3210 processor. The hard drive is relatively tiny as well, coming in at 200 GB. To run all of the OpenStack nodes on one server, I needed a virtualization layer, so I chose ProxMox (KVM).

However, running a virtualized OpenStack environment presented some interesting challenges that I didn’t fully appreciate until I was almost done with the first build…

Nested Virtualization

You’re running a virtualization platform on top of a virtualized platform. While this doesn’t seem like a huge deal in a home lab, your hardware has to support nested virtualization on the processor (at least it did in my setup). To be more specific, your VM needs to be able to load two kernel modules: kvm and kvm_intel (or kvm_amd if that’s your processor type). In all of the VM builds I had done up until this point, I found that I wasn’t able to load the proper modules…

ProxMox has a great article out there on this, but I’ll walk you through the steps I took to enable my hardware for nested virtualization.

The first thing to do is to SSH into the ProxMox host and check whether nested virtualization is already enabled. To do that, run this command…

Note: You should first check the system’s BIOS to make sure Intel VT or AMD-V is enabled there.

cat /sys/module/kvm_intel/parameters/nested

In my case, that yielded this output…

You guessed it, ‘N’ means not enabled. To change this, we need to run this command…

Note: Most of these commands are the same for Intel and AMD. Just replace any instance of ‘intel’ below with ‘amd’.

echo "options kvm-intel nested=1" > /etc/modprobe.d/kvm-intel.conf
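If you’d rather not swap ‘intel’ for ‘amd’ by hand, the check and the fix can be wrapped in a small script. This is a minimal sketch, assuming bash on the ProxMox host run as root; the function names are my own and not ProxMox tooling. It reads the CPU vendor from /proc/cpuinfo, picks kvm_intel or kvm_amd, and writes the same modprobe option shown above.

```shell
#!/usr/bin/env bash
# Sketch: vendor-aware check and fix for nested virtualization on the ProxMox host.
# Run as root. Function names are illustrative, not part of ProxMox.

# Map the vendor_id from /proc/cpuinfo to the KVM module name.
kvm_module_for_vendor() {
  case "$1" in
    GenuineIntel) echo "kvm_intel" ;;
    AuthenticAMD) echo "kvm_amd" ;;
    *) return 1 ;;
  esac
}

# Intel reports Y/N; depending on kernel version, AMD may report 1/0 instead.
nested_value_enabled() {
  case "$1" in
    Y|y|1) return 0 ;;
    *) return 1 ;;
  esac
}

# Print the KVM module that matches this host's CPU (cpuinfo path is overridable).
host_kvm_module() {
  local vendor
  vendor=$(awk -F': ' '/^vendor_id/ {print $2; exit}' "${1:-/proc/cpuinfo}")
  kvm_module_for_vendor "$vendor"
}

# Show the current setting; exit status 0 only if nested is already on.
check_nested() {
  local module param value
  module=$(host_kvm_module) || { echo "unknown CPU vendor" >&2; return 2; }
  param="/sys/module/${module}/parameters/nested"
  if [ ! -r "$param" ]; then
    echo "${module} is not loaded; check that VT-x/AMD-V is enabled in the BIOS" >&2
    return 2
  fi
  value=$(cat "$param")
  echo "${module} nested=${value}"
  nested_value_enabled "$value"
}

# Persist nested=1 for the next module load (takes effect after a reboot).
# modprobe treats '-' and '_' alike, so kvm-intel matches the kvm_intel module.
enable_nested() {
  [ -n "$1" ] || return 1
  local name="${1//_/-}" dir="${2:-/etc/modprobe.d}"
  echo "options ${name} nested=1" > "${dir}/${name}.conf"
}
```

Source the file, run `check_nested`, and if it reports the setting as disabled, run `enable_nested "$(host_kvm_module)"` before rebooting.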

Then we need to reboot the ProxMox host for the setting to take effect. Once it’s back up, you should be able to run the above command again and now get the following output…

It’s also important to set the CPU ‘type’ of the VM to ‘host’ rather than the default, ‘Default (kvm64)’, so that the guest actually sees the processor’s virtualization extensions…
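The same change can be made from the ProxMox shell with `qm`. A minimal sketch, run as root on the host; the VM ID in the usage line is hypothetical, and the `QM` variable exists only so the functions can be pointed at a stub for testing.

```shell
#!/usr/bin/env bash
# Sketch: set and read back a VM's CPU type with ProxMox's qm tool (run as root on the host).
# The VM ID is hypothetical; QM can point at a stub for testing.

# Expose the host CPU model (including the VT-x/AMD-V flag) to the guest.
set_cpu_host() {
  "${QM:-qm}" set "$1" --cpu host
}

# Print the configured CPU type; may print nothing if the VM is still on the default.
show_cpu_type() {
  "${QM:-qm}" config "$1" | awk -F': ' '/^cpu:/ {print $2}'
}
```

Usage: `set_cpu_host 101 && show_cpu_type 101` should print `host`.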

If we reboot our VM and check the kernel modules, we should see that both kvm and kvm_intel are now loaded.
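Inside the guest, that check can be scripted as well. A minimal sketch, assuming a Linux guest with the standard /proc/cpuinfo and lsmod; the function names are hypothetical, and the inputs are parameterised so the logic can be tested with sample text.

```shell
#!/usr/bin/env bash
# Sketch: checks to run inside the guest after rebooting it.
# Paths are the standard Linux locations; inputs are parameterised so they can be tested.

# Succeeds if the guest CPU advertises VT-x (vmx) or AMD-V (svm).
# With the default kvm64 CPU type this flag is typically missing.
cpu_has_virt_flags() {
  grep -Eqw 'vmx|svm' "${1:-/proc/cpuinfo}"
}

# Reads lsmod-style output on stdin; succeeds if kvm and kvm_intel/kvm_amd are listed.
kvm_modules_loaded() {
  local mods
  mods=$(awk 'NR > 1 {print $1}')
  printf '%s\n' "$mods" | grep -qx 'kvm' &&
    printf '%s\n' "$mods" | grep -Eqx 'kvm_(intel|amd)'
}
```

Usage: `cpu_has_virt_flags && lsmod | kvm_modules_loaded && echo "nested KVM ready"`. Once the modules load, `/dev/kvm` should also exist in the guest.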

Once the correct modules are loaded, you’ll be all set to run nested KVM.

The Network

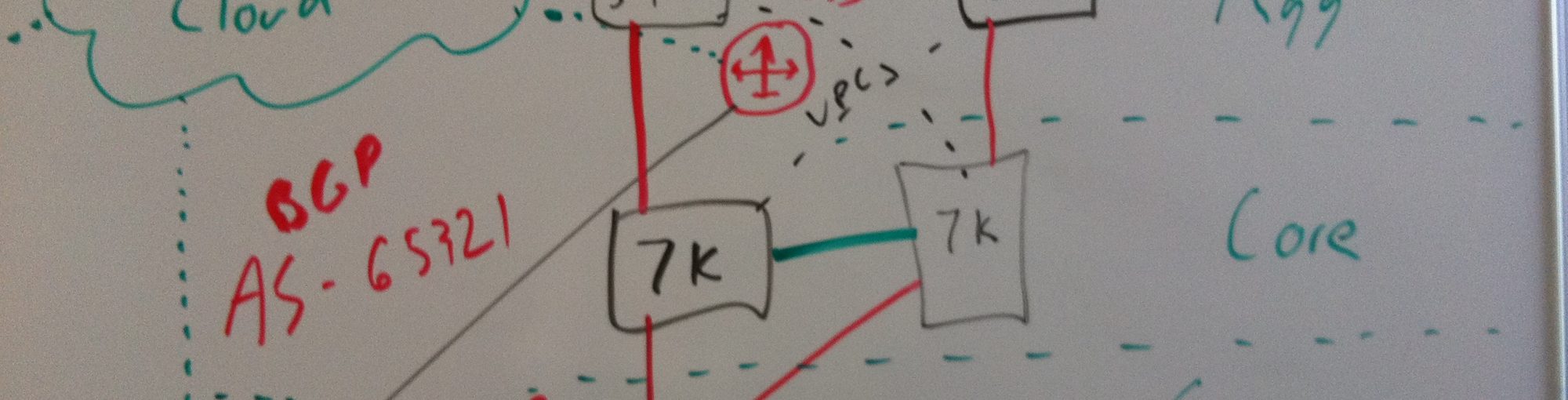

From a network perspective, we want our hosts to logically look something like this…

Nothing too crazy here, just a VM with 3 NICs. While I’m used to running all sorts of crazy network topologies virtually, this one gave me slight pause. One of the modes OpenStack uses to get traffic out to the physical network is dot1q (VLAN) trunking. In most virtual deployments, the hypervisor host gets a trunk port from the physical switch carrying multiple VLANs. Those VLANs are then mapped to ports or port-groups, which can be assigned to VMs. The net effect is that the VMs appear on the physical network in whatever VLAN you map them into, without any config on the VM OS itself. This is very much like plugging a physical server into a switch port configured as an access port in a particular VLAN. That model looks something like this…

This is the model I planned on using for the management and the overlay NIC on each VM. However, the same model does not apply to our third NIC. This NIC needs to be able to send traffic that is tagged on the VM itself. That looks more like this…

So while the first two interfaces are easy, the third is entirely different, since what we’re really building is a trunk within a trunk. The physical diagram therefore looks more like this…

At first I thought that as long as the VM NIC for the third interface (the trunk) was untagged, things should just work. The VM would tag the traffic, the bridge on the ProxMox host wouldn’t modify the tag, and the physical switch would receive a tagged frame. Unfortunately, that didn’t work for me. Captures seemed to show that the ProxMox host was stripping the tags before forwarding the frames on its trunk to the physical switch. Out of desperation, I upgraded the ProxMox host from 3.4 to 4.0 and the problem magically went away. I wish I had more info on that, but that’s what fixed my issue.
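Before OpenStack is installed (its networking agents are expected to do the tagging later), one way to reproduce the “VM tags its own traffic” case is to create an 802.1Q subinterface by hand inside the VM. A minimal sketch using iproute2, run as root in the guest; the interface name eth2, VLAN 30 and the address are hypothetical, and `IP` is only there so the commands can be stubbed for testing.

```shell
#!/usr/bin/env bash
# Sketch: tag traffic inside the VM by hand to test the trunk-within-a-trunk.
# Interface name, VLAN ID and address are hypothetical; run as root inside the VM.
add_vlan_subif() {
  local parent="$1" vid="$2" addr="$3"
  local ip="${IP:-ip}"
  # Create <parent>.<vid>, which sends 802.1Q-tagged frames out of the parent NIC.
  "$ip" link add link "$parent" name "${parent}.${vid}" type vlan id "$vid" || return 1
  "$ip" addr add "$addr" dev "${parent}.${vid}" || return 1
  "$ip" link set "$parent" up || return 1
  "$ip" link set "${parent}.${vid}" up
}
```

Usage: `add_vlan_subif eth2 30 192.0.2.10/24`. Running something like `tcpdump -e -n -i <uplink> vlan 30` on the ProxMox host while pinging from the subinterface is the kind of capture that shows whether the tag survives the bridge.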

So here’s what the NIC configuration for one of the VMs looks like…

I have 3 NICs defined for the VM. Net0 will be in VLAN 10, but notice that I don’t specify a VLAN tag for that interface. This is intentional. For better or worse, I don’t have a separate management network for the ProxMox server itself; I manage it from the IP interface associated with the single bridge defined on the host (vmbr0)…

Normally, I’d tag the vmbr interface in VLAN 10, but that would mean every VM connected to that bridge would also be in VLAN 10. Since I don’t want that, I leave the bridge untagged and tag at the VM NIC level instead. So back to the original question: how does net0 end up in VLAN 10 if I’m not tagging it? On the physical switch, I configure the trunk port with a native VLAN of 10…

This tells the switch that any frame arriving untagged belongs to VLAN 10. That solves my problem and leaves me free to leave a VM NIC untagged so it lands in VLAN 10 (as I do with net0), tag at the VM NIC level, or tag on the VM itself (as I’ll do with net2), all while keeping every VM interface on a single bridge.
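Pulling the pieces together, the three-NIC layout could be applied from the ProxMox shell with `qm`. A sketch under stated assumptions: the VM ID and overlay VLAN are hypothetical, net1 is taken to be the overlay NIC tagged at the VM NIC level, and e1000 is the NIC model I was using in this build. `QM` exists only so the commands can be stubbed for testing.

```shell
#!/usr/bin/env bash
# Sketch: apply the management / overlay / trunk NIC layout to one VM with qm.
# VM ID and overlay VLAN are hypothetical; run as root on the ProxMox host.
configure_openstack_nics() {
  local vmid="$1" overlay_vlan="$2"
  local qm="${QM:-qm}"
  # net0: management; no tag, so the switch's native VLAN (10) applies.
  "$qm" set "$vmid" --net0 "e1000,bridge=vmbr0" || return 1
  # net1: overlay; ProxMox tags it at the VM NIC level.
  "$qm" set "$vmid" --net1 "e1000,bridge=vmbr0,tag=${overlay_vlan}" || return 1
  # net2: trunk; left untagged so VLAN tags applied inside the VM pass through.
  "$qm" set "$vmid" --net2 "e1000,bridge=vmbr0"
}
```

Usage: `configure_openstack_nics 101 20`. Because no MAC address is given, ProxMox should generate new ones, so running this against an existing VM will likely change its MACs.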

Summary

I can’t stress enough how important it is to start off on the right foot when building a lab like this. Mapping all of this out before you start will save you TONS of time in the long run. In the next post, we’re going to start building the VMs and installing the operating systems and prerequisites. Stay tuned!

Hey Jon,

Any reason why you are using the ‘ide’ drivers for the hard drive and ‘e1000’ drivers for the virtual network ports?

Ubuntu should have the necessary kernel support for the virtio storage and network drivers. They are supposed to be a lot faster.

You should be able to change the network driver when the VM is off, but I think you have to actually edit the text file for the VM to change the storage driver. If I remember correctly, the config files are here: ‘/etc/pve/qemu-server/’

Great post, I’ll have to fire up a couple VMs and follow along 🙂

Hey man! Thanks for the comments. I had just built these VMs and hadn’t gotten to anything besides the network config, so that’s one reason. Valid points, though. I’m curious why virtio isn’t the default in ProxMox. Seems odd now that I’m thinking about it.

I had intentionally changed to E1000 drivers when I was troubleshooting trunking, but they didn’t make a difference. I missed the IDE driver entirely, though. Good catch. I’m wondering if they use IDE since it has a larger support base? I’ll try VirtIO for both and report back in the next post, where I discuss building the VMs and the OS.

Yeah, some of the defaults are kind of odd. It might also be worth looking into the ‘iothread’ option under the hard drive and ‘multiqueue’ under the network device. I know I looked them both up in the past, but there didn’t seem to be a strong consensus as to whether they were needed or not.

It would be nice to see Proxmox put out a performance tuning guide. Most of the options are kind of mysterious.
