Note: Using SaltStack to deploy Kubernetes is something that I’ve been working on considerably since this was first posted. My most recent iteration has a considerable amount of template automation built into it and is out on github – https://github.com/jonlangemak/saltstackv2

I would love to hear feedback from anyone that tries it! A new blog post on this is coming soon, hopefully with an end-to-end walkthrough.

The more I play around with Docker and Kubernetes the more I find myself needing to rebuild my lab. Config file changes are done all over the place, permissions change, some binaries are added or updated, and things get out of sync. I always like to figure out how things work and then rebuild ‘the right way’ to make sure I know what I’m talking about. The process of rebuilding the lab took quite a bit of time and was generally annoying, so I was looking for a way to automate some of the rebuild. Having some previous experience with Chef, I thought I might give that a try but I never got around to it. Then one day I was browsing the Kubernetes github repo and noticed that there were already a fair number of SaltStack files out in the repo. I had heard about SaltStack, but had no idea what it was, so I thought I’d give it a try and see if it could help me with my lab rebuilds.

To make a long story short, it helps. A LOT. While I know I’ve only scratched the surface, the basic SaltStack implementation I came up with saved me some serious time. Not only was the rebuild faster, ongoing config changes are much easier as well. If I need to deploy a new binary or file to a set of minions, I just add it into the SaltStack config and let it take care of pushing it out to the nodes.

So in this post, I’m going to outline how I used SaltStack to rebuild the lab I’ve been using in my other Kubernetes posts. The SaltStack config I came up with works, but I’m sure it can be optimized and I hope to continue to refine it as I learn more about SaltStack. However – I’m not going to spend much time explaining what SaltStack is actually doing to make this all happen. I know, that seems counterintuitive, but in this post I want to focus on installing Salt and showing you that it can solve a problem in an awesome way. In the next post, we’ll dig into what SaltStack is actually doing on the backend as well as review the Salt configuration files that I’ll be using in this post.

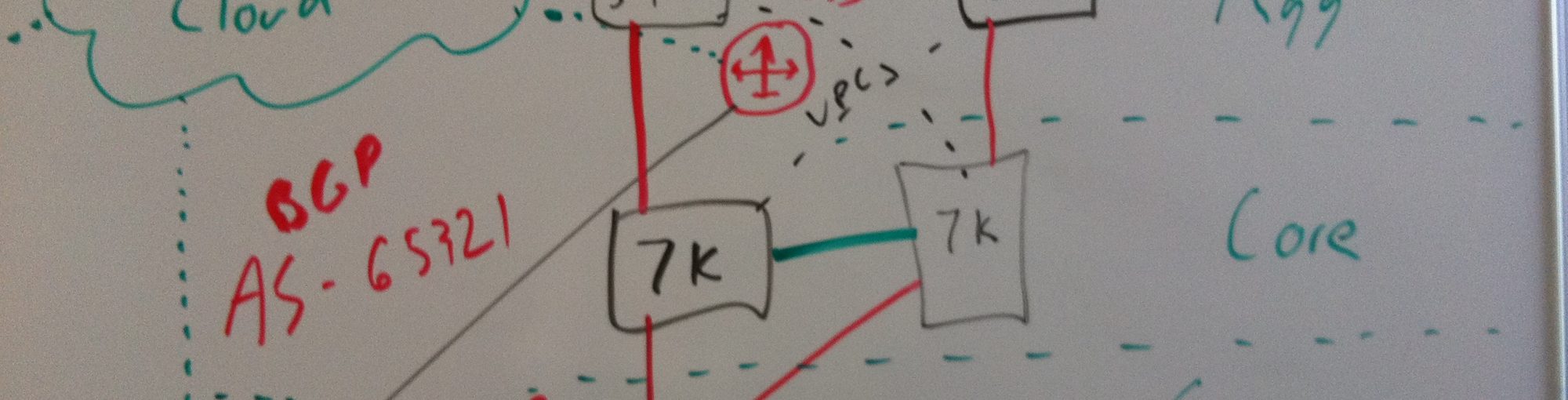

So just as a refresher, our lab looks like this…

Note: Same goes as before. The servers are already configured with a name, IP address, DNS server definition, and gateway.

We’ll be using the server kubbuild to build the Kubernetes binaries and it will also serve as the Salt master node. The remaining 5 servers will all be Salt minions. Since we’re starting from scratch, the first thing we’ll do is clone the Kubernetes repo and build the binaries we’ll need to run our cluster…

Note: These are the exact same steps from the first post in this series, Kubernetes 101 – The build. I’m copying them below just for reference.

Let’s start by downloading the tools we’ll need to build and distribute the code and configuring the services required…

    # Disable and stop the firewalld service
    systemctl disable firewalld
    systemctl stop firewalld
    # Download git, docker, and Apache web server
    yum install -y git docker httpd
    # Configure docker to start on boot and start the service
    systemctl enable docker
    systemctl start docker
    # Configure Apache to start on boot and start the service
    systemctl enable httpd
    systemctl start httpd

So at this point, we have a fairly basic system running Apache and docker. You might be wondering why we’re running docker on this system. The kubernetes team came up with a pretty cool way to build the kubernetes binaries. Rather than having you build a system that has all of the required tools (namely Go), the build script downloads a golang container that the build process runs inside of. Pretty cool, huh? Let’s get started on the kubernetes build by cloning the kubernetes repo from github…

    git clone https://github.com/GoogleCloudPlatform/kubernetes.git

This will copy the entire repository down from github. If you want to put the repository somewhere specific, make sure you cd over to that directory before running the git clone. Since this machine is just being used for this explicit purpose, I’m ok with the repository being right in the root folder. Once the clone completes we can build the code. Like I mentioned, the kubernetes team offers several build scripts that you can use to generate the required binaries to run kubernetes. We’re going to use the ‘build-cross’ script that builds all the binaries for all of the platforms. There’s more info on github about the various build scripts here.

    cd kubernetes/build
    ./run.sh hack/build-cross.sh

This will kick off the build process. It will make sure you have docker installed and then prompt you to download the golang container if you don’t already have it. This piece of the build can take a while. The golang container is over 400 MB and the build process itself is not quick either. Go get a cup of coffee and wait for this step to complete…

When the build is complete, you should get some output indicating that the build was successful.

Now this is where things are going to change from the initial build post we did. We’ll start by installing the Salt master on kubbuild. To do that, we’ll need the EPEL repository which has the YUM package for the Salt master install. Once we have the EPEL repo we can use YUM to install the salt-master package on kubbuild…

    yum install -y epel-release
    yum install -y salt-master

Once it’s installed, we need to make a small configuration change to allow Salt to distribute files to the minions. Let’s edit the file ‘/etc/salt/master’ and look for the section called ‘File Server Settings’. Uncomment the section of the config I highlight below…
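As a rough sketch of that change, assuming the stock file ships the `file_roots` block commented out with a leading `#` (the sample layout below is my assumption, not a copy of any particular Salt release), the following strips the comment markers from just that block. It works on a scratch copy rather than the real ‘/etc/salt/master’, so it is safe to try first.

```shell
# Scratch file standing in for /etc/salt/master (assumed stock layout).
conf=$(mktemp)
cat > "$conf" <<'EOF'
#####      File Server settings      #####
#file_roots:
#  base:
#    - /srv/salt
#
#pillar_roots:
#  base:
#    - /srv/pillar
EOF

# Remove the leading '#' only on the lines from 'file_roots:' through
# '- /srv/salt'; the pillar_roots block stays commented out.
edited=$(mktemp)
sed '/^#file_roots:/,/^#    - \/srv\/salt$/ s/^#//' "$conf" > "$edited"

# Print the lines that are now active.
grep -v '^#' "$edited"
```

Restricting the `sed` substitution to an address range matters because `#  base:` appears in more than one block, so a plain search-and-replace on that line would uncomment too much.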

This tells Salt that the file root is the directory ‘/srv/salt’. Save the file and exit. The last thing we have to do is enable and start the salt-master service…

    systemctl enable salt-master
    systemctl start salt-master

Now our master is up and running so the next step is to get the Salt minions online. The install process for the minions is pretty similar. Start by installing the required packages…

    yum install -y epel-release
    yum install -y salt-minion

Once the client is installed, we need to tell the client who the Salt master is. This is done by defining the master in ‘/etc/salt/minion’. Uncomment the line that starts with ‘master:’ and set it to the name of the Salt master server. My config looks like this…
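A minimal sketch of that edit, assuming the stock minion file contains the commented default `#master: salt` and that the master resolves by the short name `kubbuild` (your environment may need a fully qualified name instead). As before, it runs against a scratch file rather than the real ‘/etc/salt/minion’.

```shell
# Scratch file standing in for /etc/salt/minion (assumed stock default).
minion_conf=$(mktemp)
printf '%s\n' \
    '# Set the location of the salt master server.' \
    '#master: salt' > "$minion_conf"

# Uncomment the master line and point it at the Salt master.
edited_minion=$(mktemp)
sed 's/^#master:.*/master: kubbuild/' "$minion_conf" > "$edited_minion"

# Show the resulting setting.
grep '^master:' "$edited_minion"
```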

Once that’s defined, save the file and then enable and start the salt-minion service…

    systemctl enable salt-minion
    systemctl start salt-minion

Perform the same Salt minion config on each of the 5 servers and you’re done! Salt is fully configured. To see if we were successful, let’s head back to the kubbuild server and see if the master can talk to the minions…

Awesome! This is a good sign. All of the minions are listed, but before we can talk to them, the master needs to accept their keys. All Salt communication is encrypted, and the master has to approve the key each minion is using before it will talk to that minion. To do this, we’ll use the ‘salt-key -A’ command to accept all of the keys…

We can see that it prompts us to ensure we want to accept all of the keys and after we agree all of the minions now show up under the ‘Accepted Keys’ category. To make sure we have full connectivity we can issue a test command from the master…
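The checks in the last few steps can be sketched as one helper, assuming it is run as root on kubbuild. `salt-key -L` lists keys by state, `salt-key -A` accepts all pending keys after a confirmation prompt, and `salt '*' test.ping` asks every accepted minion to answer. The function name `verify_minions` is made up for illustration.

```shell
# Hypothetical helper: list minion keys, accept the pending ones,
# then confirm every accepted minion responds.
verify_minions() {
    salt-key -L || return 1      # show accepted / unaccepted / rejected keys
    salt-key -A || return 1      # accept all pending keys (prompts to confirm)
    salt '*' test.ping           # each reachable minion should answer True
}
```

Defining the function does nothing on its own; call `verify_minions` on the master once the minions have started. The single quotes around `*` keep the shell from expanding it before Salt sees it.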

Looks good! Now let’s move onto the fun part. Let’s clone my github repo onto kubbuild…

    git clone https://github.com/jonlangemak/saltstack.git /srv/salt/

This should create the folder ‘salt’ under the srv folder. Since we’re going to use Salt to distribute the binaries to the nodes, we need to copy the binaries to a location where Salt can find them. In this case, that would be the Salt file root that we defined in the master config file. So let’s create a directory called ‘kube_binaries’ under the ‘/srv/salt’ directory and copy the binaries into that folder…

    # Make the new directory
    mkdir /srv/salt/kube_binaries
    # Copy built binaries into new folder structure
    cd /root/kubernetes/_output/dockerized/bin/linux/amd64
    cp * /srv/salt/kube_binaries

So now we have everything in place. To build the Kubernetes lab cluster, tell Salt to run by issuing the following command…

    salt '*' state.highstate

Don’t worry about what all this means at the moment. We’ll cover all of that in the next post. For now, sit back and watch the magic happen. As Salt runs, you should start seeing messages scroll across the screen of the master indicating the current status of the run. Something that looks like this…

When it’s all done, you can scroll back up through the output and make sure nothing failed. If things look good, let’s jump over to the kubmasta server and see where things stand…
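If scrolling back through a long run gets tedious, one option is to save the output on kubbuild and total up the failures. This is a minimal sketch that assumes each minion's section of the output ends with a summary containing a line of the form `Failed: N`; the function name `count_failed_states` and the log path in the comments are hypothetical.

```shell
# Hypothetical helper: add up the "Failed:" counts found in a saved
# highstate log (one summary block per minion is assumed).
count_failed_states() {
    awk '$1 == "Failed:" { total += $2 } END { print total + 0 }' "$1"
}

# Example (on the Salt master):
#   salt '*' state.highstate --no-color | tee /tmp/highstate.log
#   count_failed_states /tmp/highstate.log
```

A result of 0 only means no state reported a failure; a minion that never responded will not show up in the count, so it is still worth checking that all five minions appear in the output.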

Nice! So it looks like our lab setup is working. Let’s deploy our sky-dns pod to it and see what happens…


Hard to see there but the pod loaded and is running as expected. Salt just deployed a fully working Kubernetes cluster on 5 machines! Pretty slick!

So I know at this point I’m portraying Salt as black magic that just works. I hate to end it there, but the point of this post was just to show you what Salt can do. The next post will cover the details of how Salt made this all happen. And like I said, I’ve just scratched the surface of Salt’s capabilities. I’m sure there will be more posts to come as I learn more about what Salt can do!


nice, but with a bit help of the template engine you could clean up the repository for more scale (http://docs.saltstack.com/en/latest/ref/renderers/all/salt.renderers.jinja.html)

Thanks for the feedback! Have you checked out my newest attempt?…

https://github.com/jonlangemak/saltstackv2

I make full use of Jinja and templating with that repo. Would love to hear your feedback on it!

Tnx for your description & the salt-files!

maybe you should also include a short part about changing the key’s, since otherwise everybody is using the same server-keys probably without even knowing it :/

btw: that’s still something I struggle with myself: confidential information & GIT 🙂

again, Tnx & keep up the good work! this helped me a lot!