Some of you will recall that I previously wrote a set of SaltStack states to provision a bare metal Kubernetes cluster. The idea was that you could use them to quickly deploy (and redeploy) a Kubernetes lab so you could get more familiar with the project and do some lab work on a real cluster. Kubernetes is a fast-moving project, and those states likely no longer work given all of the changes that have been introduced since. As I looked to refresh the posts I found that I was now much more comfortable with Ansible than with SaltStack, so this time around I decided to write the automation using Ansible (I did also update the SaltStack version, but I’ll be focusing on Ansible going forward).

However – before I could automate the configuration I had to remind myself how to do the install manually. To do this, I leaned heavily on Kelsey Hightower’s ‘Kubernetes the hard way’ instructions. These are a great resource and if you haven’t installed a cluster manually before I suggest you do that before attempting to automate an install. You’ll find that the Ansible role I wrote VERY closely mimics Kelsey’s directions so if you’re looking to understand what the role does I’d suggest reading through Kelsey’s guide. A big thank you to him for publishing it!

So let’s get started…

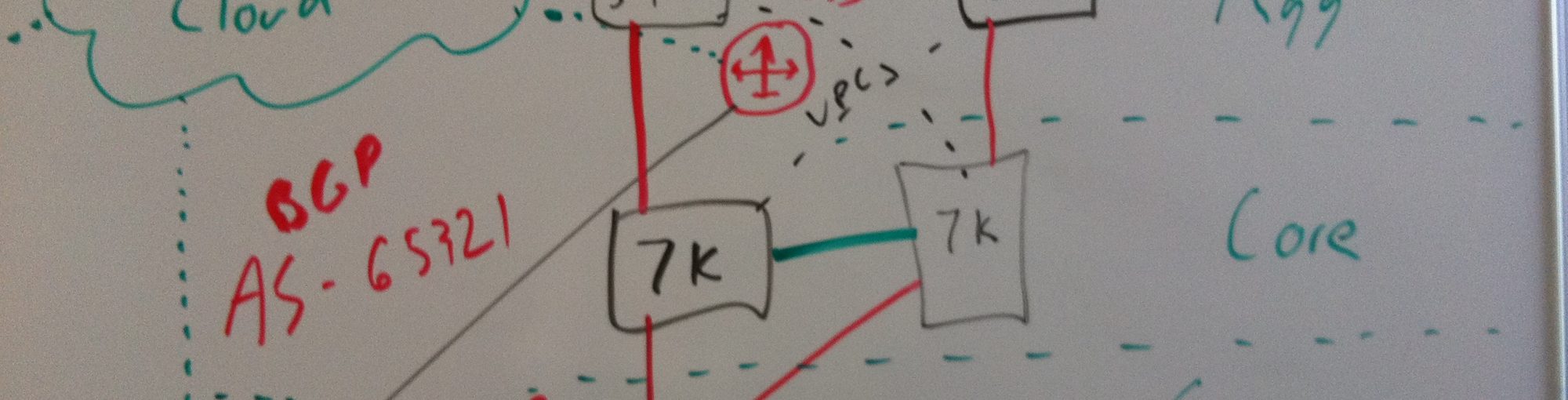

This is what my small lab looks like. A couple of brief notes on the base setup…

- All hosts are running a fresh install of Ubuntu 16.04. The only options selected for package installation were for the OpenSSH server so we could access the servers via SSH

- The servers all have static IPs as shown in the diagram and a default gateway as listed on the L3 gateway

- All servers reference a local DNS server 10.20.30.13 (not pictured) and are resolvable in the local ‘interubernet.local’ domain (ex: ubuntu-1.interubernet.local). They are also reachable via short name since the configuration also specifies a search domain (interubernet.local).

- All of these servers can reach the internet through the L3 gateway and use the aforementioned DNS server to resolve public names. This is important since the nodes will download binaries from the internet during cluster configuration.

- In my example I’m using 5 hosts. You don’t need 5, but I think you’d want at least 3 so you could have 1 master and 2 minions. The role is configurable, so you can have as many as you want

- I’ll be using the first host (ubuntu-1) as both the Kubernetes master as well as the Ansible controller. The remaining hosts will be Ansible clients and Kubernetes minions

- The servers all have a user called ‘user’ which I’ll be using throughout the configuration

With that out of the way let’s get started. The first thing we want to do is to get Ansible up and running. To do that, we’ll start on the Ansible controller (ubuntu-1) by getting SSH prepped for Ansible. The first thing we’ll do is generate an SSH key for our user (remember this is a new box, you might already have a key)…

user@ubuntu-1:~$ ssh-keygen
Generating public/private rsa key pair.
Enter file in which to save the key (/home/user/.ssh/id_rsa):
Created directory '/home/user/.ssh'.
Enter passphrase (empty for no passphrase):
Enter same passphrase again:
Your identification has been saved in /home/user/.ssh/id_rsa.
Your public key has been saved in /home/user/.ssh/id_rsa.pub.
The key fingerprint is:
SHA256:XcVTuKKo6G/5MVfEPKAyfIPMnzjfiYrnG7hZQOn445A user@ubuntu-1
The key's randomart image is:
+---[RSA 2048]----+
| . ..o.|
| = . . + .+ |
| o B + * o |
| + * + o... |
| . o o S..... |
| o o o.o.o |
| E +.oo= + |
| o.*=o + |
| .*==o. |
+----[SHA256]-----+
user@ubuntu-1:~$

Here we use the ‘ssh-keygen’ command to create a key for the user. In my case, I just hit enter to accept the defaults and to not set a passphrase on the key (remember – this is a lab). Next we need to copy the public key to all of the servers that the Ansible controller needs to talk to. To perform the copy we’ll use the ‘ssh-copy-id’ command to move the key to all of the hosts…

user@ubuntu-1:~$ ssh-copy-id user@ubuntu-5
/usr/bin/ssh-copy-id: INFO: Source of key(s) to be installed: "/home/user/.ssh/id_rsa.pub"
The authenticity of host 'ubuntu-5 (192.168.50.75)' can't be established.
ECDSA key fingerprint is SHA256:7wAk+T4fgt0p+jbGgtJAsnyHcp4TIofq42paTzzgFDg.
Are you sure you want to continue connecting (yes/no)? yes
/usr/bin/ssh-copy-id: INFO: attempting to log in with the new key(s), to filter out any that are already installed
/usr/bin/ssh-copy-id: INFO: 1 key(s) remain to be installed -- if you are prompted now it is to install the new keys
user@ubuntu-5's password:
Number of key(s) added: 1
Now try logging into the machine, with: "ssh 'user@ubuntu-5'"
and check to make sure that only the key(s) you wanted were added.
user@ubuntu-1:~$

Above I copied the key over for the user ‘user’ on the server ubuntu-5. You’ll need to do this for all 5 servers (including the master). Now that’s in place make sure that the keys are working by trying to SSH directly into the servers…
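Rather than repeating the command by hand for each server, a small loop can do it. This is a minimal sketch, assuming the five short host names from the diagram and the ‘user’ account; the `copy_keys` function and the `RUN` variable are names made up for illustration. By default it only prints the commands so you can look them over, and it only calls ‘ssh-copy-id’ when you set `RUN=1`…

```shell
#!/usr/bin/env bash
# Hosts from the lab diagram; change these to match your own servers.
HOSTS="ubuntu-1 ubuntu-2 ubuntu-3 ubuntu-4 ubuntu-5"

# copy_keys is a hypothetical helper. It prints each command by default
# and only runs ssh-copy-id when RUN=1 is set in the environment.
copy_keys() {
  for host in $HOSTS; do
    if [ "${RUN:-0}" = "1" ]; then
      ssh-copy-id "user@${host}"
    else
      echo "ssh-copy-id user@${host}"
    fi
  done
}

copy_keys
```

You’ll still be prompted for the password (and to accept the host key) once per server when you run it for real.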

user@ubuntu-1:~$ ssh -l user ubuntu-5
Welcome to Ubuntu 16.04.1 LTS (GNU/Linux 4.4.0-31-generic x86_64)
 * Documentation: https://help.ubuntu.com
 * Management: https://landscape.canonical.com
 * Support: https://ubuntu.com/advantage
40 packages can be updated.
0 updates are security updates.
*** System restart required ***
Last login: Mon Jan 23 11:14:42 2017 from 10.20.30.41
user@ubuntu-5:~$

While you’re in each server make sure that Python is installed on each host. Besides the above SSH setup – having Python installed is the only other requirement for the hosts to be able to communicate and work with the Ansible controller…

user@ubuntu-5:~$ sudo apt-get install python
[sudo] password for user:
Reading package lists... Done
Building dependency tree
Reading state information... Done
The following additional packages will be installed:
  libpython-stdlib libpython2.7-minimal libpython2.7-stdlib python-minimal python2.7 python2.7-minimal
Suggested packages:
  python-doc python-tk python2.7-doc binutils binfmt-support
The following NEW packages will be installed:
  libpython-stdlib libpython2.7-minimal libpython2.7-stdlib python python-minimal python2.7 python2.7-minimal
0 upgraded, 7 newly installed, 0 to remove and 39 not upgraded.
Need to get 3,915 kB of archives.
After this operation, 16.6 MB of additional disk space will be used.
Do you want to continue? [Y/n] Y
Get:1 http://us.archive.ubuntu.com/ubuntu xenial-updates/main amd64 libpython2.7-minimal amd64 2.7.12-1ubuntu0~16.04.1 [339 kB]
<output removed for brevity>
user@ubuntu-5:~$

In my case Python wasn’t installed (these were really stripped down OS installs so that makes sense) but there’s a good chance your servers will already have Python. Once all of the clients are tested we can move on to install Ansible on the controller node. To do this we’ll use the following commands…

sudo apt-get install software-properties-common
sudo apt-add-repository ppa:ansible/ansible
sudo apt-get update
sudo apt-get install ansible python-pip

I won’t bother showing the output since these are all pretty standard operations. Note that in addition to installing Ansible we’re also installing pip, the Python package installer. Some of the Jinja templating I do with the playbook requires the Python netaddr library. After you install Ansible and pip, take care of installing the netaddr package to get that out of the way…

user@ubuntu-1:~$ pip install netaddr
Collecting netaddr
  Downloading netaddr-0.7.19-py2.py3-none-any.whl (1.6MB)
    100% |████████████████████████████████| 1.6MB 672kB/s
Installing collected packages: netaddr
Successfully installed netaddr
You are using pip version 8.1.1, however version 9.0.1 is available.
You should consider upgrading via the 'pip install --upgrade pip' command.
user@ubuntu-1:~$

Now we need to tell Ansible what hosts we’re working with. This is done by defining hosts in the ‘/etc/ansible/hosts’ file. The Kubernetes role I wrote expects two host groups: one called ‘masters’ and one called ‘minions’. When you edit the hosts file for the first time there will likely be a series of comments with examples. To clean things up I like to delete all of the example comments and just add the two required groups. In the end my Ansible hosts file looks like this…

user@ubuntu-1:~$ sudo vi /etc/ansible/hosts
# This is the default ansible 'hosts' file.
#
# It should live in /etc/ansible/hosts
#
# - Comments begin with the '#' character
# - Blank lines are ignored
# - Groups of hosts are delimited by [header] elements
# - You can enter hostnames or ip addresses
# - A hostname/ip can be a member of multiple groups

[masters]
ubuntu-1

[minions]
ubuntu-2
ubuntu-3
ubuntu-4
ubuntu-5

You’ll note that the ‘masters’ group is plural but at this time the role only supports defining a single master.

Now that we told Ansible what hosts it should talk to we can verify that Ansible can talk to them. To do that, run this command…

user@ubuntu-1:~$ ansible masters:minions -m ping
ubuntu-3 | SUCCESS => {
    "changed": false,
    "ping": "pong"
}
ubuntu-5 | SUCCESS => {
    "changed": false,
    "ping": "pong"
}
ubuntu-1 | SUCCESS => {
    "changed": false,
    "ping": "pong"
}
ubuntu-2 | SUCCESS => {
    "changed": false,
    "ping": "pong"
}
ubuntu-4 | SUCCESS => {
    "changed": false,
    "ping": "pong"
}
user@ubuntu-1:~$

You should see a ‘pong’ result from each host indicating that it worked. Pretty easy right? Now we need to install the role. To do this we’ll create a new role directory called ‘kubernetes’ and then clone my repository into it like this…

user@ubuntu-1:~$ cd /etc/ansible/roles
user@ubuntu-1:/etc/ansible/roles$ sudo mkdir kubernetes
user@ubuntu-1:/etc/ansible/roles$ cd kubernetes/
user@ubuntu-1:/etc/ansible/roles/kubernetes$ sudo git clone https://github.com/jonlangemak/ansible_kubernetes.git .
Cloning into '.'...
remote: Counting objects: 92, done.
remote: Total 92 (delta 0), reused 0 (delta 0), pack-reused 92
Unpacking objects: 100% (92/92), done.
Checking connectivity... done.
user@ubuntu-1:/etc/ansible/roles/kubernetes$

Make sure you put the ‘.’ at the end of the git command, otherwise git will create a new folder in the kubernetes directory to put all of the files in. Once you’ve downloaded the repository you need to update the variables that Ansible will use for the Kubernetes installation. To do that, you’ll need to edit the role’s variable file, which should now be located at ‘/etc/ansible/roles/kubernetes/vars/main.yaml’. Let’s take a look at that file…

---
#Define all of your hosts here. The FQDN and IP address are important to
#get right since they will be defined in the certificates kubernetes uses
#for communication
host_roles:
  - ubuntu-1:
    type: master
    fqdn: ubuntu-1.interubernet.local
    ipaddress: 10.20.30.71
  - ubuntu-2:
    type: minion
    fqdn: ubuntu-2.interubernet.local
    ipaddress: 10.20.30.72
  - ubuntu-3:
    type: minion
    fqdn: ubuntu-3.interubernet.local
    ipaddress: 10.20.30.73
  - ubuntu-4:
    type: minion
    fqdn: ubuntu-4.interubernet.local
    ipaddress: 192.168.50.74
  - ubuntu-5:
    type: minion
    fqdn: ubuntu-5.interubernet.local
    ipaddress: 192.168.50.75

#Most of these settings are not incredibly important for your first configuration
#However make sure that the two CIDRs defined below are unique to your network as
#the cluster_node_cidr will need to be routed on your L3 gateway. Also make sure
#that the cluster_node_cidr is big as each host gets assigned a /24 out of it.
cluster_info:
  cluster_name: my_cluster
  cluster_domain: k8s.cluster.local
  service_network_cidr: 10.11.12.0/24
  cluster_node_cidr: 10.100.0.0/16
  dns_service_ip: 10.11.12.254

#Again - not super critical to change this stuff but you can
pki_info:
  cert_path: /var/lib/kube_certs
  key_size: 2048
  ca_expire: 87600h
  key_expire: 87600h
  cert_country: US
  cert_province: MN
  cert_city: Minneapolis
  cert_org: Test Org
  cert_email: [email protected]
  cert_ou: Test
  cert_name: kubernetes

#You can change these if you want but for sure leave the 'kubelet' token. You can
#change the password for it if you want but the username needs to be there.
auth_tokens:
  - token1:
    uid: jontoken
    username: jontoken
    password: jontoken
  - token2:
    uid: kubelet
    username: kubelet
    password: changeme

#I recommend leaving this alone until you get a better understanding of Kubernetes
auth_policy:
  - policy1:
    username: "*"
    group: ""
    namespace: ""
    resource: ""
    apigroup: ""
    nonresourcepath: "*"
    readonly: true
  - policy2:
    username: admin
    group: ""
    namespace: "*"
    resource: "*"
    apigroup: "*"
    nonresourcepath: ""
    readonly: false
  - policy3:
    username: scheduler
    group: ""
    namespace: "*"
    resource: "*"
    apigroup: "*"
    nonresourcepath: ""
    readonly: false
  - policy4:
    username: kubelet
    group: ""
    namespace: "*"
    resource: "*"
    apigroup: "*"
    nonresourcepath: ""
    readonly: false
  - policy5:
    username: ""
    group: system:serviceaccounts
    namespace: "*"
    resource: "*"
    apigroup: "*"
    nonresourcepath: "*"
    readonly: false

I’ve done my best to make ‘useful’ comments in here, but there’s a lot more that needs to be explained (and will be in a future post). For now, you definitely need to pay attention to the following items…

- The host_roles list needs to be updated to reflect your actual hosts. You can add more or have fewer, but the type of each host you define in this list needs to match the group it’s a member of in your Ansible hosts file. That is, a host with the minion type in the vars file needs to be in the ‘minions’ group in the Ansible hosts file.

- Under cluster_info you need to make sure you pick two networks that don’t overlap with your existing network.

- For service_network_cidr pick an unused /24. This won’t ever get routed on your network but it should be unique.

- For cluster_node_cidr pick a large network that you aren’t using (a /16 is ideal). Kubernetes will allocate a /24 for each host out of this network to use for pods. You WILL need to route this traffic on your L3 gateway but we’ll walk through how to do that once we get the cluster up and online.
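To get a feel for why a /16 is a comfortable size, here’s a quick bit of shell arithmetic. It’s just a sketch that assumes the prefix lengths from the example vars file (a /16 for cluster_node_cidr and a /24 per host); the variable names are made up for illustration and nothing here touches the role or the cluster…

```shell
#!/usr/bin/env bash
# Prefix lengths taken from the example vars file: cluster_node_cidr is a /16
# and each host is handed a /24 out of it for its pods.
CLUSTER_PREFIX=16
NODE_PREFIX=24

# Number of /24 blocks inside the /16, i.e. 2^(24-16).
node_subnets=$(( 1 << (NODE_PREFIX - CLUSTER_PREFIX) ))

# Addresses inside each /24, i.e. 2^(32-24), counting network and broadcast.
addrs_per_node=$(( 1 << (32 - NODE_PREFIX) ))

echo "per-host /${NODE_PREFIX} subnets available: ${node_subnets}"
echo "addresses in each subnet: ${addrs_per_node}"
```

If you pick something smaller, say a /22, change `CLUSTER_PREFIX` and you’ll see that only four hosts would get a pod network.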

Once the vars file is updated the last thing we need to do is tell Ansible what to do with the role. To do this, we’ll build a simple playbook that looks like this…

---
- hosts:
    - masters
    - minions
  roles:
    - kubernetes

The playbook says “Run the role kubernetes on hosts in the masters and the minions group”. Save the playbook as a YAML file somewhere on the system (in my case I just saved it in ~ as k8s_install.yaml). Now all we need to do is run it! To do that run this command…

ansible-playbook k8s_install.yaml --ask-become-pass

Note the addition of the ‘--ask-become-pass’ parameter. When you run this command, Ansible will ask you for the sudo password to use on the hosts. Many of the tasks in the role require sudo access so this is required. An alternative to passing this parameter is to edit the sudoers file on each host and allow the user ‘user’ to perform passwordless sudo. However – using the parameter is just the easier way to get up and running quickly.
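If you’d rather go the passwordless sudo route, the rule itself is a one-liner. This is a sketch only, assuming the ‘user’ account from this lab; the `LAB_USER` and `RULE` variable names and the drop-in file name in the comments are my own choices, not something the role requires. The script just prints the rule, and the commented commands show one way to install and syntax-check it on each host…

```shell
#!/usr/bin/env bash
# Account name used throughout this post; change it if yours differs.
LAB_USER="user"

# The sudoers rule that lets that account run any command without a password.
RULE="${LAB_USER} ALL=(ALL) NOPASSWD:ALL"
echo "${RULE}"

# One way to install it on a host (run there; not executed by this sketch).
# The drop-in file name is an assumption, any name without a '.' works:
#   echo "user ALL=(ALL) NOPASSWD:ALL" | sudo tee /etc/sudoers.d/user
#   sudo chmod 0440 /etc/sudoers.d/user
#   sudo visudo -cf /etc/sudoers.d/user   # checks the file's syntax
```

Once that’s in place on every host you can drop ‘--ask-become-pass’ from the command. That’s fine for a lab, but think twice before doing it anywhere else.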

Once you run the command Ansible will start doing its thing. The output is rather verbose and there will be lots of it…

user@ubuntu-1:~$ ansible-playbook k8s_install.yaml --ask-become-pass
SUDO password:

PLAY [masters,minions] *********************************************************

TASK [setup] *******************************************************************
ok: [ubuntu-1]
ok: [ubuntu-4]
ok: [ubuntu-5]
ok: [ubuntu-2]
ok: [ubuntu-3]

TASK [kubernetes : create base directories] ************************************
skipping: [ubuntu-2] => (item={u'path': u'/var/lib/kube_certs'})
skipping: [ubuntu-4] => (item={u'path': u'/var/lib/kube_certs'})
skipping: [ubuntu-3] => (item={u'path': u'/var/lib/kube_certs'})
skipping: [ubuntu-5] => (item={u'path': u'/var/lib/kube_certs'})
changed: [ubuntu-1] => (item={u'path': u'/var/lib/kube_certs'})

TASK [kubernetes : ca_config] **************************************************
skipping: [ubuntu-2]
skipping: [ubuntu-3]
skipping: [ubuntu-4]
skipping: [ubuntu-5]
changed: [ubuntu-1]

<rest of the output omitted>

If you encounter any failures using the role please contact me (or better yet open an issue in the repo on GitHub). Once Ansible finishes running we should be able to check the status of the cluster almost immediately…

user@ubuntu-1:~$ kubectl get componentstatus
NAME                 STATUS    MESSAGE              ERROR
controller-manager   Healthy   ok
scheduler            Healthy   ok
etcd-0               Healthy   {"health": "true"}
user@ubuntu-1:~$
user@ubuntu-1:~$ kubectl get nodes
NAME       STATUS     AGE
ubuntu-2   NotReady   22s
ubuntu-3   NotReady   22s
ubuntu-4   NotReady   23s
ubuntu-5   NotReady   23s
user@ubuntu-1:~$ kubectl get nodes
NAME       STATUS    AGE
ubuntu-2   Ready     1m
ubuntu-3   Ready     1m
ubuntu-4   Ready     1m
ubuntu-5   Ready     1m
user@ubuntu-1:~$

The component status should return a status of ‘Healthy’ for each component and the nodes should all move to a ‘Ready’ state. The nodes will take a minute or two to transition from ‘NotReady’ to ‘Ready’, so be patient. Once they’re up we need to work on setting up our network routing. As I mentioned above, we need to route the pod network that Kubernetes allocated to each host to that host. To find out which host got which network we can use this command, which is pointed out in the ‘Kubernetes the hard way’ documentation…

user@ubuntu-1:~$ kubectl get nodes \
> --output=jsonpath='{range .items[*]}{.status.addresses[?(@.type=="InternalIP")].address} {.spec.podCIDR} {"\n"}{end}'
10.20.30.72 10.100.2.0/24
10.20.30.73 10.100.3.0/24
192.168.50.74 10.100.1.0/24
192.168.50.75 10.100.0.0/24
user@ubuntu-1:~$

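Slick – ok. So now it’s up to us to make sure that each of those /24s gets routed to the host it was assigned to. On my gateway, that means four static routes, each with the node’s IP as the next hop: 10.100.2.0/24 via 10.20.30.72, 10.100.3.0/24 via 10.20.30.73, 10.100.1.0/24 via 192.168.50.74, and 10.100.0.0/24 via 192.168.50.75.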
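If your L3 gateway happens to be a Linux box, the routes can be generated straight from the node and pod CIDR pairs. This is only a sketch under that assumption (the kind of gateway I’m using isn’t covered here, and `print_routes` is a made-up helper name). It prints ‘ip route add’ commands rather than applying anything, so you can review them before running them as root on the gateway…

```shell
#!/usr/bin/env bash
# Node IP and pod CIDR pairs, copied from the kubectl output above.
# Replace these with the values from your own cluster.
NODE_CIDRS="10.20.30.72 10.100.2.0/24
10.20.30.73 10.100.3.0/24
192.168.50.74 10.100.1.0/24
192.168.50.75 10.100.0.0/24"

# print_routes is a hypothetical helper. It prints one static route per
# node (pod CIDR via the node's IP) and does not change any routing itself.
print_routes() {
  echo "${NODE_CIDRS}" | while read -r node_ip pod_cidr; do
    echo "ip route add ${pod_cidr} via ${node_ip}"
  done
}

print_routes
```

On a router or L3 switch the syntax will differ, but the idea is the same: one static route per pod /24 with the owning node as the next hop.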

Make sure you add the routes on your L3 gateway before you move on. Once routing is in place we can deploy our first pods. The first pod we’re going to deploy is kube-dns, which is used by the cluster for name resolution. The Ansible role already took care of placing the pod definition files for kube-dns on the controller for you; you just need to tell the cluster to run them…

user@ubuntu-1:~$ cd /var/lib/kubernetes/pod_defs/
user@ubuntu-1:/var/lib/kubernetes/pod_defs$ kubectl create -f kubedns-svc.yaml
service "kube-dns" created
user@ubuntu-1:/var/lib/kubernetes/pod_defs$ kubectl create -f kubedns.yaml
deployment "kube-dns-v20" created
user@ubuntu-1:/var/lib/kubernetes/pod_defs$

As you can see there is both a service and a pod definition you need to install by passing the YAML file to the kubectl command with the ‘-f’ parameter. Once you’ve done that we can check to see the status of both the service and the pod…

user@ubuntu-1:~$ kubectl --namespace=kube-system get svc
NAME       CLUSTER-IP     EXTERNAL-IP   PORT(S)         AGE
kube-dns   10.11.12.254   <none>        53/UDP,53/TCP   1m
user@ubuntu-1:~$
user@ubuntu-1:~$ kubectl --namespace=kube-system get pods
NAME                            READY     STATUS    RESTARTS   AGE
kube-dns-v20-1485703853-59p28   3/3       Running   0          1m
kube-dns-v20-1485703853-r8wk2   3/3       Running   0          1m
user@ubuntu-1:~$

If all went well you should see that each pod is running all three containers (denoted by the 3/3) and that the service is present. At this point you’ve got yourself your very own Kubernetes cluster. In the next post we’ll walk through deploying an example pod and step through how Kubernetes does networking. Stay tuned!

Hello

Does this set up employ High Availability?

Definitely not. The build puts a single instance of all critical components on one server. This is best for the quick stand up of a lab environment but I plan on making the role more robust in the future.

Hi Jon,

I’m not sure but… after reproducing the lab from the example on the last step: creating kube-dns’s pods, my setup stuck on ContainerCreating:

rado@ubu1:/var/lib/kubernetes/pod_defs$ kubectl --namespace=kube-system get pod

NAME READY STATUS RESTARTS AGE

kube-dns-v20-2501578361-7p3cj 0/3 ContainerCreating 0 5m

kube-dns-v20-2501578361-lfskh 0/3 ContainerCreating 0 5m

rado@ubu1:/var/lib/kubernetes/pod_defs$ kubectl describe

I solved it by applying weave to the master node:

kubectl apply -f https://git.io/weave-kube

Regards

Hi again Jon, Please delete my previous comment…

hello

is autoscale enabled by this role ?

Can you clarify what you mean by autoscale?

hello

the ansible role finished ok with no errors but i get this

ubuntu@ubuntu1:~$ kubectl get componentstatus

The connection to the server localhost:8080 was refused - did you specify the right host or port?

ubuntu@ubuntu1:~$

any tips.. ? thanks

hi

can someone please help with the above error?

Sorry for the delay – what does your configuration for kube-apiserver look like?

Thx for this awesome posts about docker and kubernetes.

I tried this at home but get a few problems to the end:

it seems to me that the minions didn’t get the correct pod networks. When executing “kubectl get nodes…” I get an error:

“Error executing template: podCIDR is not found. Printing more information for debugging the template:”

Never mind. I found the comments in your git repository. 😉

Anything I can do to make things more clear? Let me know!

Hello,I’m trying this on openstack machines (ip’s like 10.97.177….).

The installation has finished with no error but when i try to check the etcd cluster it’s down

kubectl get componentstatus

NAME STATUS MESSAGE ERROR

controller-manager Healthy ok

etcd-0 Unhealthy Get https://192.168.0.49:2379/health: x509: certificate is valid for 10.11.12.1, 127.0.0.1, 10.97.177.xx, 10.97.177.xx, 10.97.177.xx, 10.97.177.xx, 10.97.177.xx, not 192.168.0.49

scheduler Healthy ok

What i’ve changed is:

user from “user” to “ubuntu”; this is a sudoer

IP’s and machine names

on /etc/hosts on each machine i’ve added all the machines with names and the associated IP’s

I don’t understand why the etcd cluster it’s down.

Please advice.

Thank You

Hi, similar problem with my setup as well. etcd-0 status is unhealthy due to certificate invalid for 10.0.2.15

Hi Adrian, Did you get a resolution to the error?

I am facing similar issue

This is indisputably one of the best series i have found on kubernetes networking

Kudos to you sir

Great article. The ansible playbook ran without error, but my nodes are NotReady:

ansible@invader2:~$ kubectl get componentstatus

NAME STATUS MESSAGE ERROR

scheduler Healthy ok

controller-manager Healthy ok

etcd-0 Healthy {"health": "true"}

ansible@invader2:~$ kubectl get nodes

NAME STATUS AGE VERSION

sr2 NotReady 32m v1.7.0

sr3 NotReady 32m v1.7.0

On the nodes I see in syslog:

Feb 16 18:50:30 sr3 kubelet[2142]: W0216 18:50:30.294085 2142 cni.go:189] Unable to update cni config: No networks found in /etc/cni/net.d

Sure enough there is no /etc/cni directory. Any ideas what went wrong?